
There are a variety of methods for classifying objects, with some more sophisticated than others. In general, RSAC prefers classification and regression tree (CART)–type algorithms because they are robust, relatively easy to use, and reliably produce good results. Therefore, this article will focus on CART-based methods.

Introductory Videos

Learn the basics of SPM® in one-hour video overviews. This is a great place to start if you're new to decision trees. Video presentations include introductions to CART®, MARS®, TreeNet®, Random Forests®, and the basics of data mining.

Training Videos

Salford Systems offers comprehensive training on each product within the SPM® Salford Predictive Modeler software suite. These training courses are broken down into one-hour increments that cover the theory and application of the software. If you're seriously using the software and want to know the theory behind each tool, these training courses are recommended. Personalized training is available for purchase. If you're interested in scheduling on-site or customized online training for a team of analysts, please contact Salford Systems at info (at) salford-systems (dot) com, or phone 619-543-8880.

Webinars & Tutorials

Watch hands-on webinars and tutorials to better your understanding of the SPM® machine learning and predictive analytics software.

How-To

Access a wide range of video documentation to help you get started and understand the key concepts behind each product's functionality.

Tips and Tricks

After you have mastered the data mining basics, explore new ways to squeeze out extra insight from your models and leverage the underlying methodology of Salford Systems' data mining software.

Competitive Advantage

What makes Salford Systems' data mining tools unique? These videos explore unique combinations of our data mining algorithms that will help you gain insight and predictive power.

Expert User

This series of videos is designed for the SPM power user. If you are using the Ultra, or even the Pro Ex version of SPM, you may benefit from these advanced data mining combinations and commands.

Software Demonstrations

These videos contain demonstrations of the techniques using the SPM® Software Suite. Software featured in the videos: SPM® Software Suite, CART® Software, Random Forests® Software, TreeNet® Software, MARS® Software, RuleLearner® Software, ISLE© Software, Generalized PathSeeker™ Software.

SPM®

Frequently Asked Questions for The SPM® Software Suite

The Salford Predictive Modeler® software suite is a highly accurate and ultra–fast platform for developing predictive, descriptive, and analytical models from databases of any size, complexity, or organization. The SPM® software suite is in use in major organizations and by leaders in fraud detection, credit risk, insurance, direct marketing, online analytics, manufacturing, pharmaceuticals, logistics, natural resources, auditing, security, national defense, and more. Data mining technologies within the SPM® software suite span classification, regression, survival analysis, missing value analysis, and clustering/segmentation to cover all aspects of your data mining projects. The SPM® software suite's algorithms are considered to be essential in data mining circles.

The SPM® software suite's automation accelerates the process of model building by conducting substantial portions of the model exploration and refinement process for the analyst. While the analyst is always in full control, the software can optionally anticipate the analyst's next best steps and package a complete set of results from alternative modeling strategies for easy review.

CART®

Frequently Asked Questions for CART®

CART® is the ultimate classification tree that has revolutionized the entire field of advanced analytics and inaugurated the current era of data mining. CART, which is continually being improved, is the most important tool in modern data mining methods. Designed for both non-technical and technical users, CART can quickly reveal important data relationships that could remain hidden using other analytical tools.

CART is based on landmark mathematical theory introduced in 1984 by four world–renowned statisticians at Stanford University and the University of California at Berkeley. Salford Systems' implementation of CART is the only decision tree software embodying the original proprietary code. The CART creators continue to collaborate with Salford Systems to enhance CART with proprietary advances.

MARS®

MARS® is ideal for users who prefer results in a form similar to traditional regression while capturing essential non–linearities and interactions. The MARS approach to regression modeling effectively uncovers important data patterns and relationships that are difficult, if not impossible, for other regression methods to reveal.

Conventional regression models typically fit straight lines to data. MARS approaches model construction more flexibly, allowing for bends, thresholds, and other departures from straight-line methods. MARS builds its model by piecing together a series of straight lines with each allowed its own slope. This permits MARS to trace out any pattern detected in the data.
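To make the "series of straight lines" idea concrete, here is a minimal sketch of the kind of piecewise-linear function a MARS-style model represents, built from a hinge term of the form max(0, x - knot). The knot at 100 minutes, the coefficients, and the function names are hypothetical values chosen for illustration. The sketch shows only the shape of such a model, not how the MARS® software selects knots or fits coefficients.

```python
def hinge(x, knot):
    """Hinge basis term: zero up to the knot, then rising with slope 1."""
    return max(0.0, x - knot)


def predict_bill(minutes):
    """Hypothetical piecewise-linear model of a monthly phone bill.

    A flat fee of 20 applies up to 100 minutes, then 0.5 per extra minute.
    Both numbers are made up for illustration.
    """
    base_fee = 20.0
    extra_rate = 0.5
    return base_fee + extra_rate * hinge(minutes, knot=100.0)


# The line is flat before the knot and bends upward after it.
for minutes in (50, 100, 140):
    print(minutes, predict_bill(minutes))
```

Adding more hinge terms with different knots gives each segment its own slope, which is how a model of this form can follow bends and thresholds in the data.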

The MARS model is designed to predict continuous numeric outcomes such as the average monthly bill of a mobile phone customer or the amount that a shopper is expected to spend in a web site visit. MARS is also capable of producing high quality probability models for a yes/no outcome. MARS performs variable selection, variable transformation, interaction detection, and self–testing, all automatically and at high speed.

TreeNet®

TreeNet® is Salford's most flexible and powerful data mining tool, responsible for at least a dozen prizes in major data mining competitions since its introduction in 2002. The algorithm typically generates thousands of small decision trees built in a sequential error–correcting process to converge to an accurate model.
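The "sequential error-correcting process" can be illustrated with a generic boosting loop: each small tree is fitted to the errors left by the trees before it, and its contribution is added with a small weight. The sketch below is a simplified, assumption-laden illustration using one-split trees (stumps) on made-up one-dimensional data. The function names, the learning rate, and the number of rounds are hypothetical, and this is not TreeNet's actual implementation.

```python
def fit_stump(xs, residuals):
    """Find the single split on x that best fits the residuals (least squares)."""
    best = None
    for threshold in sorted(set(xs))[:-1]:  # keeps both sides non-empty
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    return best[1:]


def boost(xs, ys, n_rounds=10, learning_rate=0.5):
    """Fit stumps one after another, each to the errors remaining so far."""
    baseline = sum(ys) / len(ys)
    stumps = []
    preds = [baseline] * len(xs)
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        threshold, left_mean, right_mean = fit_stump(xs, residuals)
        stumps.append((threshold, left_mean, right_mean))
        preds = [p + learning_rate * (left_mean if x <= threshold else right_mean)
                 for x, p in zip(xs, preds)]
    return baseline, stumps, learning_rate


def predict(model, x):
    """Sum the baseline and the weighted corrections from every stump."""
    baseline, stumps, learning_rate = model
    return baseline + sum(
        learning_rate * (left if x <= threshold else right)
        for threshold, left, right in stumps
    )


# Toy data (made up): the target jumps from 1 to 5 between x = 4 and x = 5.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1, 1, 1, 1, 5, 5, 5, 5]
model = boost(xs, ys)
print(predict(model, 2), predict(model, 7))
```

Each round halves the remaining error on this toy data, so the predictions move steadily toward 1 and 5 as more stumps are added.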

TreeNet models are usually complex and thus the software generates a number of special reports designed to extract the meaning of the model. Graphs produced by TreeNet software display the impact of any relevant predictor or pair of predictors on the target, thus revealing the underlying data structure.

TreeNet's robustness extends to data contaminated with erroneous target labels. This type of data error can be very challenging for conventional data mining methods and will be catastrophic for conventional boosting. In contrast, TreeNet is generally immune to such errors as it dynamically rejects training data points too much at variance with the existing model. In addition, TreeNet adds the advantage of a degree of accuracy usually not attainable by a single model or by ensembles such as bagging or conventional boosting. As opposed to neural networks, TreeNet is not sensitive to data errors and needs no time–consuming data preparation, preprocessing or imputation of missing values.

Random Forests®

Random Forests® is a bagging tool that leverages the power of multiple alternative analyses, randomization strategies, and ensemble learning to produce accurate models, insightful variable importance ranking, and laser–sharp reporting on a record–by–record basis for deep data understanding. Its strengths are spotting outliers and anomalies in data, displaying proximity clusters, predicting future outcomes, identifying important predictors, discovering data patterns, replacing missing values with imputations, and providing insightful graphics.

Much of the insight provided by Random Forests is generated by methods applied after the trees are grown and include new technology for identifying clusters or segments in data as well as new methods for ranking the importance of variables. The method was developed by Leo Breiman and Adele Cutler of the University of California, Berkeley, and is licensed exclusively to Salford Systems. Ongoing research is being undertaken by Salford Systems in collaboration with Professor Adele Cutler, the surviving co–author of Random Forests.

Random Forests is a collection of many CART trees that are grown independently of one another. The predictions of the individual trees are combined, by voting for classification or averaging for regression, to determine the overall prediction of the forest. This algorithm is best suited for the analysis of complex data structures embedded in small to moderate data sets containing fewer than 10,000 rows but potentially millions of columns.
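As a rough illustration of how independently built trees are combined into a single forest prediction, the sketch below takes a majority vote over three hand-written rule sets that stand in for trees. The rules, thresholds, and the example flower are hypothetical. In a real forest each tree would be grown automatically on a bootstrap sample with random feature selection, which is omitted here.

```python
from collections import Counter


# Three hypothetical "trees", each a small set of hand-written rules.
def tree_a(row):
    if row["petal_length"] <= 2.45:
        return "setosa"
    return "versicolor" if row["petal_width"] <= 1.75 else "virginica"


def tree_b(row):
    if row["petal_width"] <= 0.8:
        return "setosa"
    return "versicolor" if row["petal_length"] <= 4.75 else "virginica"


def tree_c(row):
    if row["petal_length"] <= 2.45:
        return "setosa"
    return "versicolor" if row["petal_length"] <= 4.95 else "virginica"


def forest_predict(trees, row):
    """Return the class predicted by the largest number of trees."""
    votes = Counter(tree(row) for tree in trees)
    return votes.most_common(1)[0][0]


trees = [tree_a, tree_b, tree_c]
flower = {"petal_length": 4.9, "petal_width": 1.5}  # made-up measurements
print([tree(flower) for tree in trees])
print(forest_predict(trees, flower))
```

Here one tree disagrees with the other two, and the vote settles on the majority class. Using an odd number of trees avoids ties in a two-way disagreement.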

Need Help With SPM?

You've come to the right place! Below you will find a wide range of topic-specific user guides for SPM. If you can't find the answer you need, our support team members are ready to help you find a solution.


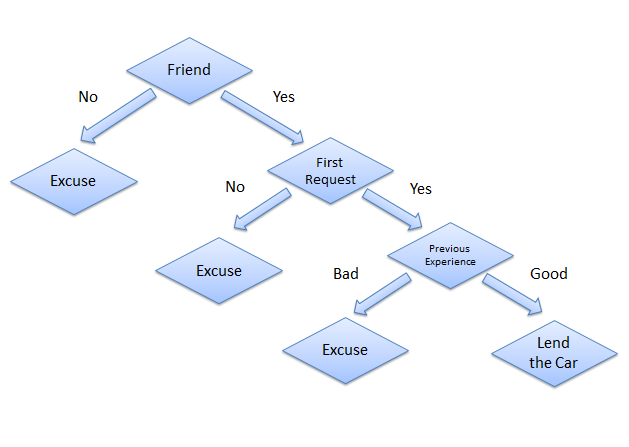

The CART algorithm is structured as a sequence of questions, the answers to which determine what the next question, if any, should be. The result of these questions is a tree-like structure whose ends are terminal nodes, at which point there are no more questions. A simple example of a decision tree is as follows [Source: Wikipedia]:

The main elements of CART (and any decision tree algorithm) are:

In order to understand this better, let us consider the Iris dataset (source: UC-Irvine Machine Learning Repository http://archive.ics.uci.edu/ml/). The dataset consists of 5 variables and 151 records as shown below:

In this data set, 'Class' is the target variable while the other four variables are independent variables. In other words, the 'Class' is dependent on the values of the other four variables. We will use IBM SPSS Modeler v15 to build our tree. To do this, we attach the CART node to the data set. Next, we choose our options in building out our tree as follows:

On this screen, we pick the maximum tree depth, which is the maximum number of 'levels' we want in the decision tree. We also choose the option of 'Pruning' the tree, which is used to avoid over-fitting. More about pruning in a different blog post.
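For readers without access to SPSS Modeler, the same two choices (a maximum depth and pruning) can be sketched with scikit-learn, whose decision trees use an optimized version of CART. This is an alternative illustration, not the tool used in this post, and the specific values of `max_depth`, `ccp_alpha`, and `random_state` are assumptions picked for the example. The resulting tree may differ in detail from the one SPSS Modeler produces.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier, export_text

# Iris ships with scikit-learn, so no download is needed.
iris = load_iris()

tree = DecisionTreeClassifier(
    max_depth=2,      # at most two levels of splits below the root
    ccp_alpha=0.01,   # cost-complexity pruning strength (illustrative value)
    random_state=0,   # makes tie-breaking between equally good splits repeatable
)
tree.fit(iris.data, iris.target)

# Print the fitted tree as indented text rules.
print(export_text(tree, feature_names=iris.feature_names))
```

Larger `ccp_alpha` values prune more aggressively, trading some fit on the training data for a simpler tree.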

On this screen, we choose stopping rules, which determine when further splitting of a node stops or when further splitting is not possible. In addition to maximum tree depth discussed above, stopping rules typically include reaching a certain minimum number of cases in a node, reaching a maximum number of nodes in the tree, etc. Conditions under which further splitting is impossible include when [Source: Handbook of Statistical Analysis and Data Mining Applications by Nisbet et al]:

Next we run the CART node and examine the results. We first look at Predictor Importance, which represents the most important variables used in splitting the tree:

From the chart above, we note that the most important predictor (by a wide margin) is the length of the petal, followed by the width of the petal.
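A comparable ranking can be obtained outside SPSS Modeler. The sketch below uses scikit-learn's impurity-based `feature_importances_` on a depth-2 tree. This is an assumption about tooling, and the numbers need not match the chart above, because different tools compute importance differently and petal length and petal width are strongly related, so one can stand in for the other.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier

iris = load_iris()
tree = DecisionTreeClassifier(max_depth=2, random_state=0)
tree.fit(iris.data, iris.target)

# Pair each feature name with its importance and sort from high to low.
ranked = sorted(
    zip(iris.feature_names, tree.feature_importances_),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, importance in ranked:
    print(f"{name:20s} {importance:.3f}")
```

The importances are normalized to sum to 1, so each value can be read as a share of the total impurity reduction achieved by the tree.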

A scatter plot of petal length against petal width also reflects the predictor importance:

This should also be reflected in the decision tree generated by CART. Let us examine this next:

As can be seen, the first node is split based on our most important predictor, the length of the petal. The question posed is 'Is the length of the petal greater than 2.45 cm?'. If not, the iris falls in the class 'setosa'. If yes, the class could be either 'versicolor' or 'virginica'. Since we have completely classified 'setosa' in Node 1, that becomes a terminal node and no additional questions are posed there. However, Node 2 still needs to be broken down to separate 'versicolor' from 'virginica'. Therefore, the next question is posed, based on our second most important predictor, the width of the petal.
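One way to see why this first split is so effective is to measure how much it reduces class impurity. The sketch below uses Gini impurity, a common splitting criterion for classification trees; whether the tree above was built with exactly this criterion is an assumption. The class counts assume the standard Iris composition of 50 flowers per class.

```python
def gini(class_counts):
    """Gini impurity: 0 for a pure node, larger when classes are mixed."""
    total = sum(class_counts)
    return 1.0 - sum((count / total) ** 2 for count in class_counts)


# Counts are (setosa, versicolor, virginica).
root = [50, 50, 50]     # all flowers, before any question is asked
node_1 = [50, 0, 0]     # petal length not greater than 2.45 cm
node_2 = [0, 50, 50]    # petal length greater than 2.45 cm

n = sum(root)
weighted_after = (sum(node_1) / n) * gini(node_1) + (sum(node_2) / n) * gini(node_2)

print("impurity before split:", round(gini(root), 4))
print("impurity after split: ", round(weighted_after, 4))
print("reduction:            ", round(gini(root) - weighted_after, 4))
```

Node 1 has zero impurity, which is why it becomes a terminal node, while Node 2 is an even mix of two classes and is the natural place for the next question.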

As expected, in this case, the question relates to the width of the petal. From the nodes, we can see that by asking the second question, the decision tree has almost completely separated the data into 'versicolor' and 'virginica'. We could continue splitting further until there is no overlap between classes in each node; however, for the purposes of this post, we will stop our decision tree here. We attach an Analysis node to see the overall accuracy of our predictions:

From the analysis, we can see that the CART algorithm has classified 'setosa' and 'virginica' accurately in all cases and accurately classified 'versicolor' in 47 of the 50 cases giving us an overall accuracy of 97.35%.
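The reported accuracy can be checked with simple arithmetic. The sketch below assumes 50 flowers in each of 'setosa' and 'virginica' (the standard Iris composition) and tries two denominators, since the post mentions 151 records while the standard dataset has 150. Which record count the Analysis node actually used is not stated, so this is a consistency check only.

```python
# Correctly classified cases per class, as described in the text.
correct_per_class = {"setosa": 50, "versicolor": 47, "virginica": 50}
correct = sum(correct_per_class.values())

# Compare the accuracy under both candidate record counts.
for n_records in (150, 151):
    accuracy = 100 * correct / n_records
    print(f"{correct} / {n_records} = {accuracy:.2f}%")
```

With 151 records the result is 97.35%, matching the figure above; with 150 records the same counts would give 98.00%.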

Some useful features and advantages of CART [adapted from: Handbook of Statistical Analysis and Data Mining Applications by Nisbet et al]:
